From IP Address to Intelligent Gateway#

In Chapter 1, we laid the foundational pillar by solving the bare-metal IP address problem with MetalLB. Our test NGINX service successfully acquired the IP 10.20.0.90, proving our cluster can now serve traffic like its cloud-native counterparts.

But an IP address alone is just a raw entry point. In any production-grade environment, you need an intelligent gateway to inspect traffic, route requests based on hostnames, and manage security. This is where an Ingress Controller, or in our case, a modern Gateway, comes into play.

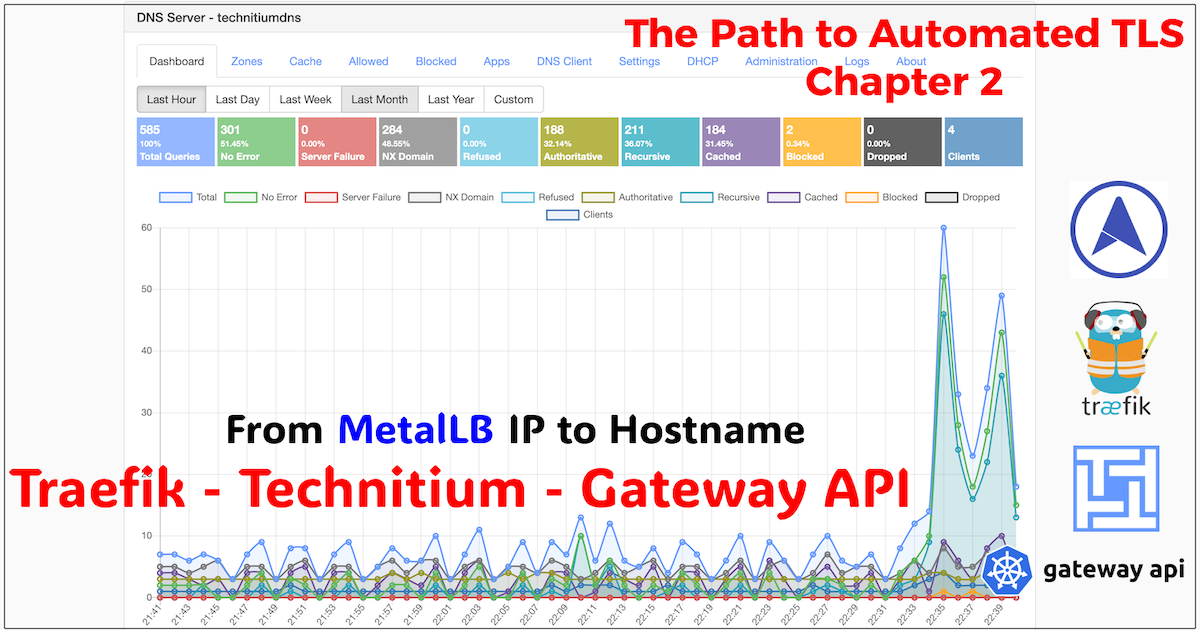

Welcome to Chapter 2. With a stable IP address from MetalLB, we will now deploy Traefik Proxy to act as our application gateway (or L7 router). To make our services accessible by name, we’ll also establish an internal DNS backbone with Technitium DNS Server. This combination will transform our raw IP into a fully-featured, name-based routing system.

Traefik and the Rise of the Gateway API#

For years, Ingress was the standard way to expose HTTP/S services in Kubernetes. However, it was plagued by limitations: a fragmented feature set dependent on annotations, and a lack of standardization across controllers.

The Gateway API is the official evolution of Ingress. It provides a standardized, role-oriented, and expressive API for managing traffic. Instead of one monolithic Ingress object, it splits the responsibility:

- GatewayClass: Defines a template for Gateways (e.g., traefik).

- Gateway: A request for a load balancer, managed by the infrastructure team. This binds to a specific IP (hello, MetalLB!) and defines listeners (e.g., port 443).

- HTTPRoute: Application-level routing rules, managed by developers. It defines how requests for a specific hostname are forwarded to backend services.

This separation of concerns is a core tenet of enterprise GitOps, and it’s why we’re choosing the Gateway API for our homelab.
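To make the first two roles concrete, here is a rough sketch of a GatewayClass and a Gateway written by hand. The controller name traefik.io/gateway-controller is the one Traefik registers for the Gateway API. The resource names, namespace, and listener port are assumptions chosen to line up with the values used later in this chapter. In practice the Traefik Helm chart can generate equivalent objects for you.

```yaml
# Illustrative only: names, namespace, and port are assumptions.
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: traefik
spec:
  # Tells the cluster which controller implements Gateways of this class.
  controllerName: traefik.io/gateway-controller
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: traefik
  namespace: traefik
spec:
  gatewayClassName: traefik
  listeners:
    - name: web
      protocol: HTTP
      port: 8000
      allowedRoutes:
        namespaces:
          # Allow HTTPRoutes from any namespace to attach to this listener.
          from: All
```

The infrastructure team owns these two objects. Developers only ever touch the HTTPRoute that attaches to the Gateway.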

DNS Deep Dive: Technitium as the Internal Backbone#

Before we can route traffic by hostname, we need a DNS server that can resolve those names to an IP address. For a production-grade homelab, this means creating our own private DNS zones.

Technitium DNS Server is a powerful, self-hosted DNS server packed with features typically found in enterprise products. For our homelab, it serves a critical purpose: acting as the authoritative DNS server for our internal domains.

A Note on Homelab Evolution: From Pi-hole to Technitium#

Readers who have followed my blog from the beginning, particularly in my post “From Enterprise to Homelab: Transforming My Home Network”, might recall that I originally planned to use Pi-hole for DNS filtering. While Pi-hole is an excellent tool, my homelab is a constantly evolving platform. As I standardized my tooling, I decided to migrate to Technitium DNS Server. It offers advanced features like split-horizon DNS and conditional forwarding, which align better with my long-term goals. This is a natural part of the homelab process: you start with one tool, learn, and adapt as your requirements become more sophisticated.

The dev.thebestpractice.tech Zone#

In my public DNS, I own the domain thebestpractice.tech. To create a safe, isolated space for my homelab experiments, I will create a new subdomain exclusively for this environment: dev.thebestpractice.tech.

Inside Technitium DNS Server, I will create a new Primary Zone for dev.thebestpractice.tech. For now, this zone is completely private and authoritative only within my local network.

The next step is to create a wildcard A record (*.dev.thebestpractice.tech) that points all traffic for this domain to the IP address of our Traefik gateway, 10.20.0.90. Once we deploy Traefik, its LoadBalancer service will receive this IP from MetalLB.

Now, any request for *.dev.thebestpractice.tech will resolve to 10.20.0.90, directing all traffic for our development environment to our Traefik gateway.

A Note on Wildcard DNS in Production#

Using a wildcard record is a fantastic shortcut for a homelab or development environment. It means we don’t have to create a new DNS entry every time we deploy a new service.

However, in a true production environment, this is not a recommended practice. Production systems favor explicit, specific DNS records for each service (argo.dev.thebestpractice.tech, grafana.dev.thebestpractice.tech, etc.) for tighter security and control.

We will address this “production gap” in a future blog post, where we’ll integrate a tool like ExternalDNS. It will watch our Kubernetes cluster and automatically create precise DNS records for each HTTPRoute we create. For now, the wildcard is the perfect accelerator for our private setup.

GitOps Implementation: Deploying Traefik with ArgoCD#

Following the same enterprise GitOps pattern from Chapter 1, Traefik is deployed via a multi-layered ArgoCD configuration. This ensures our gateway is declarative, version-controlled, and consistent with the rest of our platform.

Directory Structure#

The directory structure for Traefik mirrors the one we used for MetalLB, maintaining a clean separation between base configuration and environment-specific overrides.

    .
    ├── base
    │   ├── ingress
    │   │   ├── metallb
    │   │   │   └── ...
    │   │   └── traefik
    │   │       ├── traefik.yaml
    │   │       └── values.yaml
    ├── environments
    │   ├── dev
    │   │   ├── ingress
    │   │   │   ├── traefik
    │   │   │   │   ├── custom-values
    │   │   │   │   │   └── override.values.yaml
    │   │   │   │   └── root-traefik.yaml
    │   │   │   └── routes
    │   │   │       └── http-routes
    │   │   │           └── argocd.yaml
    └── ...

1. The Base Application Manifest#

The base manifest defines the core ArgoCD Application for Traefik. Just like with MetalLB, it uses a multi-source pattern: one source points to the official Traefik Helm chart, and the other points to our own Git repository to fetch the values.yaml files.

base/ingress/traefik/traefik.yaml:

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: traefik
      namespace: argocd
      finalizers:
        - resources-finalizer.argocd.argoproj.io
    spec:
      destination:
        namespace: traefik
        server: https://kubernetes.default.svc
      project: argo-config
      sources:
        - repoURL: https://github.com/anvaplus/homelab-k8s-argo-config.git
          targetRevision: main
          ref: valuesRepo
        - repoURL: https://traefik.github.io/charts
          chart: traefik
          targetRevision: 39.0.0
          helm:
            releaseName: traefik
            valueFiles:
              - $valuesRepo/base/ingress/traefik/values.yaml
      syncPolicy:
        automated:
          prune: true
          selfHeal: true

2. Base and Environment-Specific Helm Values#

Our values are split. The base/ingress/traefik/values.yaml is kept minimal, containing only chart defaults or universal settings.

The real configuration happens in the environment overlay file, environments/dev/ingress/traefik/custom-values/override.values.yaml. This is where we enable the Gateway API and tell Traefik to request a LoadBalancer service.
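How the overlay file reaches the chart depends on how the environment-level Application is wired. One common way to layer values in a multi-source ArgoCD Application is to list both files under valueFiles, since Helm lets later files override earlier ones. The excerpt below is a hypothetical sketch of that idea, not necessarily what root-traefik.yaml in this repository does.

```yaml
# Hypothetical excerpt of an Application spec; shown only to illustrate
# value layering. The actual wiring in the repository may differ.
sources:
  - repoURL: https://github.com/anvaplus/homelab-k8s-argo-config.git
    targetRevision: main
    ref: valuesRepo
  - repoURL: https://traefik.github.io/charts
    chart: traefik
    targetRevision: 39.0.0
    helm:
      releaseName: traefik
      valueFiles:
        # Base values are applied first...
        - $valuesRepo/base/ingress/traefik/values.yaml
        # ...and the environment overlay overrides them.
        - $valuesRepo/environments/dev/ingress/traefik/custom-values/override.values.yaml
```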

environments/dev/ingress/traefik/custom-values/override.values.yaml:

    providers:
      kubernetesCRD:
        enabled: false # Disable legacy CRD provider
      kubernetesIngress:
        enabled: false # Disable legacy Ingress provider
      kubernetesGateway:
        enabled: true # Enable the new Gateway API provider

    # This tells Traefik to create a service of type LoadBalancer.
    service:
      type: LoadBalancer
      # We explicitly request the IP from our pool
      spec:
        loadBalancerIP: 10.20.0.90

    # -- Traefik Gateway configuration
    # Change listeners namespace policy to all namespaces
    gateway:
      enabled: true
      listeners:
        web:
          port: 8000
          protocol: HTTP
          namespacePolicy:
            from: All

Notice we explicitly set loadBalancerIP: 10.20.0.90. This ensures Traefik gets the specific, predictable IP address we’ve allocated for it, which is essential for our DNS configuration to work.
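One caveat worth knowing: Kubernetes has deprecated the spec.loadBalancerIP field since v1.24. MetalLB still honors it, but it also supports requesting an address through an annotation. The fragment below is a minimal sketch of that alternative, assuming MetalLB 0.13 or later and that the chart's service.annotations value is used to attach the annotation. Check the annotation prefix against the MetalLB version you run.

```yaml
# Alternative sketch: request the IP via a MetalLB annotation
# instead of the deprecated spec.loadBalancerIP field.
service:
  type: LoadBalancer
  annotations:
    metallb.universe.tf/loadBalancerIPs: 10.20.0.90
```

Either approach should leave Traefik's service on the same address, so the DNS record does not need to change.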

3. Deploying a Test Application#

With Traefik deployed, the next step is to expose an application. To maintain consistency, we’ll use the same NGINX application from Chapter 1, but with one critical difference.

Since Traefik now manages external access via its own LoadBalancer service, our application services no longer need to be of type LoadBalancer. They can be standard, internal ClusterIP services. Traefik will route traffic to them internally.

For quick verification, I’ll apply this manifest directly with kubectl.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-test-deployment
      namespace: default
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - image: nginx
              name: nginx
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-test-service
      namespace: default
    spec:
      selector:
        app: nginx
      ports:
        - protocol: TCP
          port: 80
          targetPort: 80
      type: ClusterIP # Note: No longer a LoadBalancer!

4. Exposing the Service with an HTTPRoute#

Now we create an HTTPRoute resource to tell our gateway how to route traffic to our new nginx-test-service. This manifest instructs the traefik gateway to listen for requests for test.dev.thebestpractice.tech and forward them to our NGINX service.

    kind: HTTPRoute
    apiVersion: gateway.networking.k8s.io/v1beta1
    metadata:
      name: nginx-test-route
      namespace: default
    spec:
      parentRefs:
        - kind: Gateway
          name: traefik
          namespace: traefik
      hostnames: ["test.dev.thebestpractice.tech"]
      rules:
        - matches:
            - path:
                type: PathPrefix
                value: /
          backendRefs:
            - name: nginx-test-service
              kind: Service
              port: 80

5. Verification: End-to-End Traffic Flow#

After the HTTPRoute is deployed, the entire flow is complete. Let’s test it:

- A client makes a request to http://test.dev.thebestpractice.tech.

- The client's DNS query for that name hits our Technitium DNS server.

- Technitium resolves the name to 10.20.0.90 because of our wildcard A record.

- The request is sent to the Traefik LoadBalancer service at that IP.

- Traefik inspects the request’s Host header (test.dev.thebestpractice.tech).

- It matches the HTTPRoute rule and forwards the traffic to the nginx-test-service.

To confirm our success, we can open a web browser on a machine that uses Technitium for DNS resolution and navigate to http://test.dev.thebestpractice.tech. The result is the default NGINX welcome page, served through our new gateway.

Success! We have established a complete, name-based routing system, from DNS to gateway to service.

Conclusion: The Gateway is Open#

We now have an intelligent entry point into our cluster. MetalLB provides the stable IP, and Traefik’s Gateway routes traffic based on hostnames. Inside our network, our internal Technitium DNS server resolves hostnames in the dev.thebestpractice.tech zone to Traefik’s private IP, completing the internal traffic loop. This setup mirrors the L4/L7 load balancing and service discovery patterns of a real enterprise cloud.

But there’s one critical piece missing: automated TLS. Our gateway is ready, but it’s not yet terminating encrypted traffic.

In Chapter 3, we will tackle this by implementing a sophisticated split-horizon DNS strategy. We will use the same dev.thebestpractice.tech zone in two places:

- Publicly, to perform DNS-01 challenges with Let’s Encrypt.

- Internally, with Technitium, for local resolution.

We will instruct Cert-Manager to use the public DNS provider for its challenges, even though the cluster itself uses Technitium for DNS. This allows us to get publicly trusted certificates for our private services, achieving a true production-grade setup.

Stay tuned! Andrei