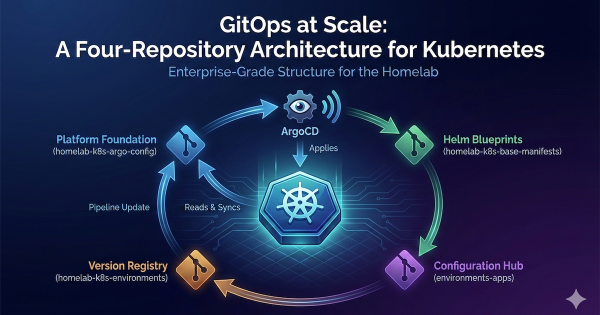

In my last post, The Four-Repo GitOps Structure for My Homelab Platform, I laid out the architectural blueprint for managing my homelab like a production environment. Building on the automation I detailed in my popular post, Need for Speed: Automating Proxmox K8s Clusters with Talos Omni, we now have a cluster ready for a production-grade CNI. Now that we have a solid GitOps foundation and a running Talos Kubernetes cluster, it’s time to address a critical component: networking.

The Journey So Far

In this series, we’ve built a powerful foundation for a homelab Kubernetes platform. We started by installing Talos Omni to get a centralized management plane. Then, we walked the “scenic route” by manually provisioning a cluster to understand the nuts and bolts. Finally, we achieved true velocity by automating cluster creation, turning our Kubernetes infrastructure into a disposable, on-demand resource.

In my previous posts, I walked through installing Talos Omni and then manually provisioning a Talos Kubernetes cluster on Proxmox. Both were essential learning experiences. Getting Talos Omni running was a huge win, and working through the manual provisioning process (downloading the ISO, creating VMs, configuring static IPs in the console, and patching nodes) built a strong foundation. But the real game-changer wasn’t just running Kubernetes; it was discovering how quickly I could create it.

In the world of platform engineering, our goal is always to automate everything. But before we can appreciate the elegance of a fully automated workflow, it’s incredibly valuable to walk through the manual process at least once. It builds a deep understanding of what’s happening under the hood.

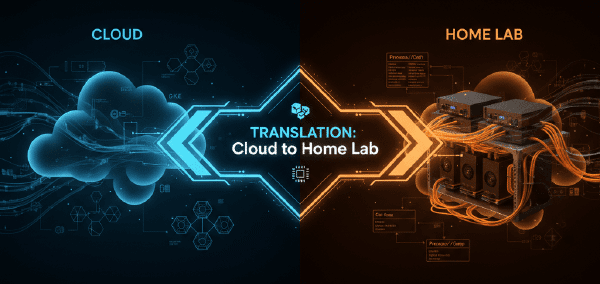

In the world of enterprise cloud, managing Kubernetes clusters with services like GKE, EKS, or AKS is standard practice. These platforms offer incredible power but come with a learning curve and, more importantly, a cost that’s hard to justify for a homelab. As a platform engineer, I’m used to building and managing production-grade infrastructure, but as I explained in my first post, Why not a homelab?, I wanted a solution for my homelab that offered a similar centralized management experience without the overhead.

This is Part 2. If you need the cloud-to-homelab translation and requirement framing, read Part 1: From Cloud Sizing to Requirements first.

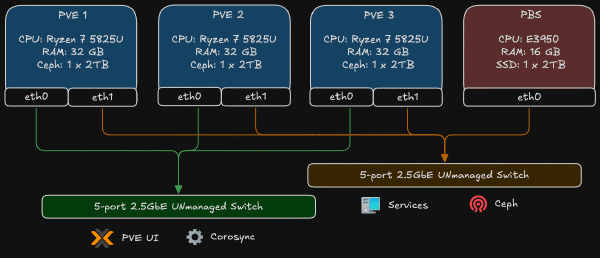

When you architect a Kubernetes cluster, you don’t think about heat dissipation or power consumption. You think in abstractions: N2 instances, vCPUs, memory tiers. Click, deploy, bill. The infrastructure vanishes behind APIs and Terraform declarations. But the moment you decide to build that same cluster in your homelab, those abstractions collapse into very real decisions: which CPU, how much RAM, what kind of storage, and critically, how much will this cost me in electricity every month?

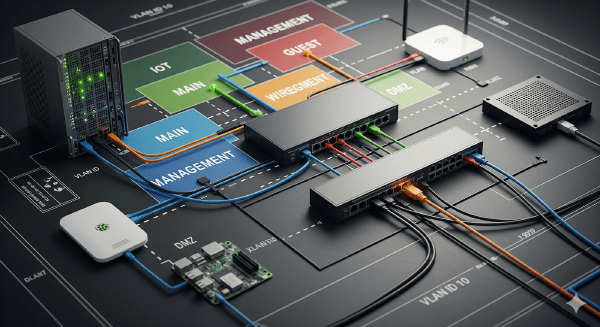

I love diagrams, but diagrams don’t wire cables for me. In this post I will show the physical mapping, the Proxmox bridge pattern I used, the OPNsense management model, and the first firewall policy I used to protect the lab. The network was already in place; below I explain what I did to build and secure it.

Hey there! In my last post, I shared why I’m starting this homelab journey. Today I’m taking it a step further: I’m rebuilding my home network from a simple, flat LAN into a segmented, security‑first setup, very similar to how Google Cloud designs hub‑and‑spoke networks. If you’re new here, you might want to start with my introduction: Why not a homelab?

Hey there! If you’re reading this, you’re about to embark on an adventure with me that I never thought I’d start.